If you’re scaling a drone program from 10 aircraft to 500, the hard problem stops being aerodynamics. It becomes the ground energy loop: the charging infrastructure and operating standard that determines whether turnaround time, safety controls, and compliance behave the same way across every site.

This is written for fleet operations leaders and procurement owners who need a charging standard they can replicate across sites, shifts, and regions.

Here’s what you’ll get in this article:

how operational variance in charging workflows becomes scalability debt

a simple delay model to make energy operations CFO-visible

what “repeatable ground ops” look like, including minimum instrumentation

how to future-proof for multi-voltage fleets (including 18S–32S)

The goal is simple: help you build a ground energy loop that scales without turning ops into constant firefighting.

Drone fleet charging workflows at scale

Variance becomes “scalability debt” when fleets rely on manual, non-standard actions in the ground loop.

A pilot program can survive on heroics:

the one technician who knows the “right” charger settings

the informal rule of thumb for when packs are “safe enough” to redeploy

the custom workaround when a station throws a weird fault

At 500 aircraft, those workarounds become scalability debt—a compounding liability created by every manual, non-standard action in the ground loop.

In large fleets, the enemy isn’t average performance. It’s variance—because variance is what breaks schedules, inflates spares, and makes SLAs hard to price.

A practical example: Site A charges a pack at a conservative profile because an experienced tech “knows what works.” Site B bumps the current because they’re chasing turnaround. Nothing looks broken locally—until you compare the network.

Site A’s packs come off charge cooler but later.

Site B’s packs turn faster, but hit higher temperatures, trip protection more often, and require more manual checks.

That’s scalability debt in plain terms: one “reasonable” local tweak becomes a network-level spread in turnaround time, fault rate, and retirement decisions.

Quantifying operational variance in large-scale fleets

Operational variance is the spread between “how long this should take” and “how long it actually takes.” In energy workflows, it shows up as:

different charge profiles applied by different operators

inconsistent cooling and thermal staging time

uneven pack balancing health

station congestion and queueing

manual rework after faults

What counts as a “manual intervention” in charging ops

A manual intervention is any step where an operator has to break the default flow to keep charging moving: changing settings, troubleshooting, re-running checks, handling paperwork, or recovering from a station fault. The more often this happens, the more unpredictable your network’s throughput becomes.

Here’s a simple way to make that cost CFO-visible.

Example assumption model (illustrative):

Fleet grows from 10 → 500 drones.

Each flight cycle requires one energy turn (charge, swap, dock, or hybrid).

A “manual intervention” event (human reconfiguration, troubleshooting, paperwork, rework) happens in p% of cycles.

Each intervention costs t minutes of delay.

Then total delay per day scales roughly as:

Delay minutes/day ≈ cycles/day × p × t (with p expressed as a fraction, so 5% = 0.05)

To put numbers on it (illustrative example):

Assume 1,000 energy turns/day across the network.

Each intervention costs 10 minutes end-to-end (diagnose → reconfigure → document → recover).

If you reduce interventions from 5% → 0.5%, you cut daily delay from 500 minutes → 50 minutes (saving 450 minutes/day).

If the value of one grounded drone-hour (lost dispatch window, rework, missed SLA buffer) is $100–$300, that’s roughly $274k–$821k/year in avoidable downtime value (450 min/day = 7.5 hours/day ≈ 2,738 hours/year). The point isn’t the exact dollar figure; it’s how fast small rates become big money at scale.
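The arithmetic above can be reproduced in a few lines, which makes it easy to swap in your own rates. This is a minimal sketch: the function names are hypothetical, the inputs are the illustrative figures from this section, and 365 operating days per year is an assumption.

```python
MINUTES_PER_HOUR = 60


def daily_delay_minutes(cycles_per_day: float, intervention_rate: float,
                        minutes_per_intervention: float) -> float:
    """Delay minutes/day = cycles/day x p x t, with p as a fraction (0.05 = 5%)."""
    return cycles_per_day * intervention_rate * minutes_per_intervention


def annual_downtime_value(saved_minutes_per_day: float, value_per_drone_hour: float,
                          days_per_year: int = 365) -> float:
    """Convert saved delay minutes per day into a yearly dollar figure."""
    saved_hours_per_day = saved_minutes_per_day / MINUTES_PER_HOUR
    return saved_hours_per_day * days_per_year * value_per_drone_hour


# Illustrative inputs from the text: 1,000 turns/day, 10 minutes per intervention.
before = daily_delay_minutes(1000, 0.05, 10)    # 5% intervention rate
after = daily_delay_minutes(1000, 0.005, 10)    # 0.5% intervention rate
saved = before - after

low = annual_downtime_value(saved, 100)    # $100 per grounded drone-hour
high = annual_downtime_value(saved, 300)   # $300 per grounded drone-hour
print(f"saved {saved:.0f} min/day, worth ${low:,.0f} to ${high:,.0f} per year")
```

Keeping p as a fraction avoids the most common spreadsheet mistake with this model, which is multiplying by 5 instead of 0.05.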

Even if p is “small,” the product becomes large when cycles/day explodes. And because interventions don’t distribute evenly, the true cost is not just labor minutes—it’s missed dispatch windows, knock-on queueing, and late deliveries.

This is the moment your ground energy loop becomes a throughput constraint, not a support function.

The practical question is how you drive p down without hiring heroics: guardrails that prevent ad hoc settings, profile control that eliminates drift, and minimum instrumentation that turns exceptions into a standard response instead of improvisation.

Once you can quantify variance, the next question is where it shows up on a P&L—because that’s where total cost of ownership becomes the real story.

The hidden TCO of proprietary vs. standardized infrastructure

When teams build proprietary ground infrastructure, the initial CAPEX can look controlled: “We’ll design our own charge station,” “We’ll build our own swap rig,” “We’ll write a quick configuration tool.”

The margin killer arrives later, in OPEX.

To make this visible, use an iceberg TCO model:

| Cost layer | Proprietary / non-standard ground stack (typical exposure) | Standardized / modular stack (target outcome) |
|---|---|---|
| Visible CAPEX | station hardware, custom fixtures, bespoke tooling | repeatable station modules, documented parts |
| Configuration drift | site-by-site parameter variance, firmware fragmentation | controlled profiles, versioned releases |
| Training cost | “tribal knowledge” ramp-up, specialist dependence | atomized tasks, role-based SOPs |
| Spare parts | unique parts per region, long-tail SKU explosion | fewer SKUs, global commonality |
| Maintenance | ad hoc fixes, low observability, reactive replacements | telemetry-driven maintenance, predictable schedules |
| Compliance burden | inconsistent records, hard-to-audit process | audit-friendly logs and traceability |
| Expansion friction | every new site is a mini-project | new site = copy/paste of an operating unit |

If your infrastructure requires “your best people” to keep it stable, it’s not scalable infrastructure—it’s a craft process. And craft does not survive global deployment.

If proprietary infrastructure creates drift, the antidote is repeatability: the same tasks, the same profiles, and the same evidence trail at every site.

Standardized charging infrastructure as a competitive moat

You don’t need a single flagship case study to extract the operational lesson: make the ground loop boring.

What separates a demo fleet from a replicable network is whether the “energy loop” behaves the same way in every location—regardless of who is on shift.

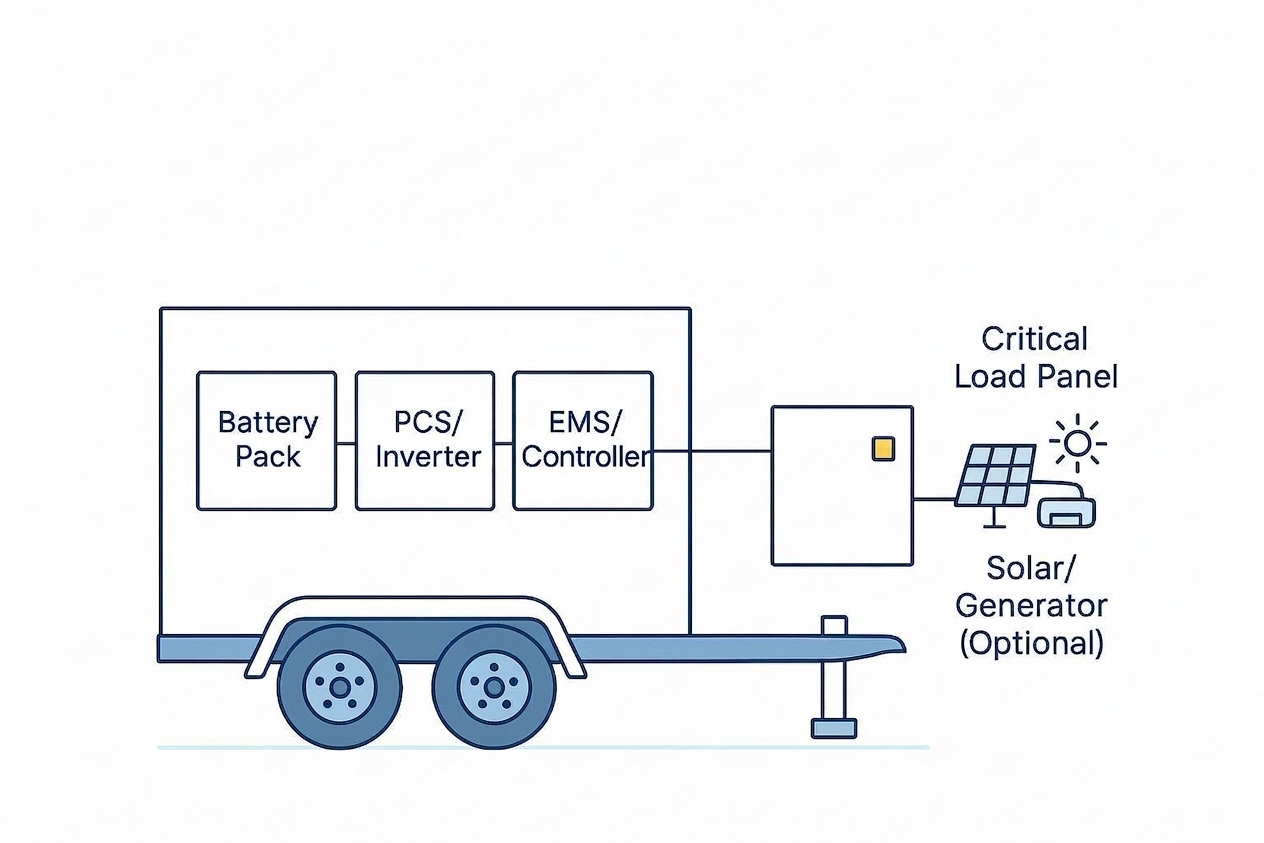

What repeatable ground ops look like in practice

Zipline’s public materials are a useful reference point for the question that motivates this article: what changes when a drone logistics network grows 50x? At that scale, the takeaway isn’t the aircraft; it’s the discipline of making ground operations repeatable (see Zipline’s official site and its About page). The rest of this section generalizes that idea into supplier-agnostic mechanisms you can apply to your own network.

Three mechanisms to look for in any Zipline-style network:

Replication unit: a site is treated as a copyable operating unit (same hardware, same SOPs, same training).

Variance control: charging, cooling, and handling steps are engineered so outcomes stay within tight bands across shifts and geographies.

Audit-grade instrumentation: every station produces the same evidence trail (profiles applied, exceptions, operator actions), so reliability is measurable—not anecdotal.

If you can’t describe your ground energy loop in those terms, scaling tends to turn into hiring heroics instead of building a machine.

From “craft” to “industrial process”: the ground-op revolution

The transition from pilot to network happens when you do three things:

Atomize the ground tasks

Break “charge and prep the drone” into discrete, testable micro-actions.

Each micro-action has a defined input, action, and pass/fail output.

De-skill the tasks

Design the workflow so a non-specialist can execute it safely.

If a task requires deep intuition, it belongs in engineering—not daily ops.

How to reduce interventions with guardrails and profile control

De-skilling only works when the hardware and software are built for guardrails. For example, industrial chargers that support protocol matching and profile control can turn “set the right parameters” into “select the right battery and connect,” so operators aren’t improvising current limits under time pressure.

In practice, many operators look for high-power chargers with built-in safety logic (limits, checks, and standardized profiles) so “good charging behavior” becomes repeatable across sites.
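One way to picture “select the right battery and connect” in software is an interface that has no current setting at all. The sketch below is an assumption about how such a guardrail could look, not any vendor’s API: the pack models, profile IDs, and limits are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChargeProfile:
    profile_id: str
    version: str
    series_cells: int
    max_current_a: float      # current limit fixed by engineering, not the operator
    max_start_temp_c: float   # pack must be at or below this before charging starts


# Hypothetical approved profiles, keyed by pack model. All values are placeholders.
APPROVED_PROFILES = {
    "PACK-18S": ChargeProfile("std-18s", "1.2.0", 18, 40.0, 45.0),
    "PACK-32S": ChargeProfile("std-32s", "1.0.3", 32, 30.0, 45.0),
}


class GuardrailError(Exception):
    """Raised when a charge request falls outside the approved flow."""


def start_charge(pack_model: str, pack_temp_c: float) -> ChargeProfile:
    """Return the approved profile for a pack, or refuse to start.

    There is deliberately no 'current' argument: the operator selects the
    battery, and the profile supplies every limit.
    """
    profile = APPROVED_PROFILES.get(pack_model)
    if profile is None:
        raise GuardrailError(f"no approved profile for {pack_model!r}: hold and escalate")
    if pack_temp_c > profile.max_start_temp_c:
        raise GuardrailError(
            f"pack at {pack_temp_c} C exceeds start limit {profile.max_start_temp_c} C: cool first"
        )
    return profile


profile = start_charge("PACK-18S", pack_temp_c=31.0)
print(profile.profile_id, profile.version, profile.max_current_a)
```

Because the refusal message names the next action, the exception path is itself a standard response and not something the shift has to invent.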

Instrument the minimum viable ground loop

Instrumentation is what closes the loop: every station should produce the same evidence trail—what profile was applied, what exceptions occurred, and what the operator did next. Without that, you don’t have a scalable process; you have anecdotes.

At a minimum, log: profile ID/version, pack ID, start/stop timestamps, key telemetry (voltage, current, temperature), exceptions/fault codes, operator actions taken, and final outcome (pass/hold/retire). Standardize how faults are detected, classified, and responded to so shifts don’t invent new workflows under time pressure.

For compliance-first operators, this isn’t “nice to have.” It’s what makes audits, root-cause analysis, and cross-site reliability reviews repeatable.
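The minimum log fields listed above map naturally onto a single record per charge session. The following is a minimal sketch under stated assumptions: the class and field names are hypothetical, `station_id` is added for cross-site comparison, and telemetry is reduced to peak values for brevity where a real system would likely keep a time series.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List

VALID_OUTCOMES = {"pass", "hold", "retire"}


@dataclass
class ChargeSessionRecord:
    station_id: str
    pack_id: str
    profile_id: str
    profile_version: str
    started_at: str           # ISO 8601 timestamp
    stopped_at: str           # ISO 8601 timestamp
    peak_voltage_v: float
    peak_current_a: float
    peak_temp_c: float
    outcome: str              # one of VALID_OUTCOMES
    fault_codes: List[str] = field(default_factory=list)
    operator_actions: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject free-text outcomes so every site reports the same vocabulary.
        if self.outcome not in VALID_OUTCOMES:
            raise ValueError(f"outcome must be one of {sorted(VALID_OUTCOMES)}")

    def to_json_line(self) -> str:
        """Serialize to one JSON line for export to QA or compliance tooling."""
        return json.dumps(asdict(self), sort_keys=True)


record = ChargeSessionRecord(
    station_id="site-a-03", pack_id="PK-000123",
    profile_id="std-18s", profile_version="1.2.0",
    started_at="2025-01-01T08:00:00Z", stopped_at="2025-01-01T08:52:00Z",
    peak_voltage_v=75.6, peak_current_a=38.5, peak_temp_c=41.2,
    outcome="hold", fault_codes=["TEMP_WARN"],
    operator_actions=["moved pack to cooling rack"],
)
print(record.to_json_line())
```

Restricting `outcome` to a fixed set is the small design choice that matters most here: it keeps cross-site reports comparable without manual cleanup.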

If your expansion plan assumes you can hire enough specialists to keep manual energy workflows stable, you are budgeting for a bottleneck.

Infrastructure determinism: creating a predictable logistics engine

Logistics contracts are sold on outcomes:

on-time rate

uptime and coverage windows

safety record

incident response

Those outcomes depend on whether your infrastructure behaves deterministically:

A charge profile behaves the same way at every site.

Battery qualification and retirement rules are consistent.

Faults trigger standardized actions.

Turnaround time variance stays within a known band.

Determinism is what makes SLAs “financeable.” If the network’s variability is uncontrolled, you can’t price risk. If you can’t price risk, margins vanish.
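A “known band” only means something if you can check sites against it. As a rough sketch, assuming you already collect turnaround times per site, the function below flags sites whose median turnaround sits more than a chosen number of minutes from the network median. The site names, sample times, and 7-minute band are made up for illustration.

```python
from statistics import median
from typing import Dict, List


def sites_outside_band(turnaround_by_site: Dict[str, List[float]],
                       band_minutes: float) -> Dict[str, float]:
    """Return each out-of-band site with its deviation from the network median."""
    site_medians = {site: median(times) for site, times in turnaround_by_site.items()}
    network_median = median(site_medians.values())
    return {
        site: site_median - network_median
        for site, site_median in site_medians.items()
        if abs(site_median - network_median) > band_minutes
    }


# Made-up turnaround times in minutes for three sites.
samples = {
    "site_a": [62, 65, 63, 66],   # conservative profile: cooler but later
    "site_b": [48, 51, 47, 50],   # higher current: faster turns
    "site_c": [57, 58, 56, 59],
}
flagged = sites_outside_band(samples, band_minutes=7)
print(flagged)
```

This compares site medians only. A fuller check would also look at the spread within each site, since a site can match the network median and still be unpredictable shift to shift.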

As a battery supplier, we see the same pattern repeatedly: when charge profiles, handling steps, and exception rules aren’t standardized, fleets don’t fail because of one big defect—they bleed performance through a thousand small inconsistencies.

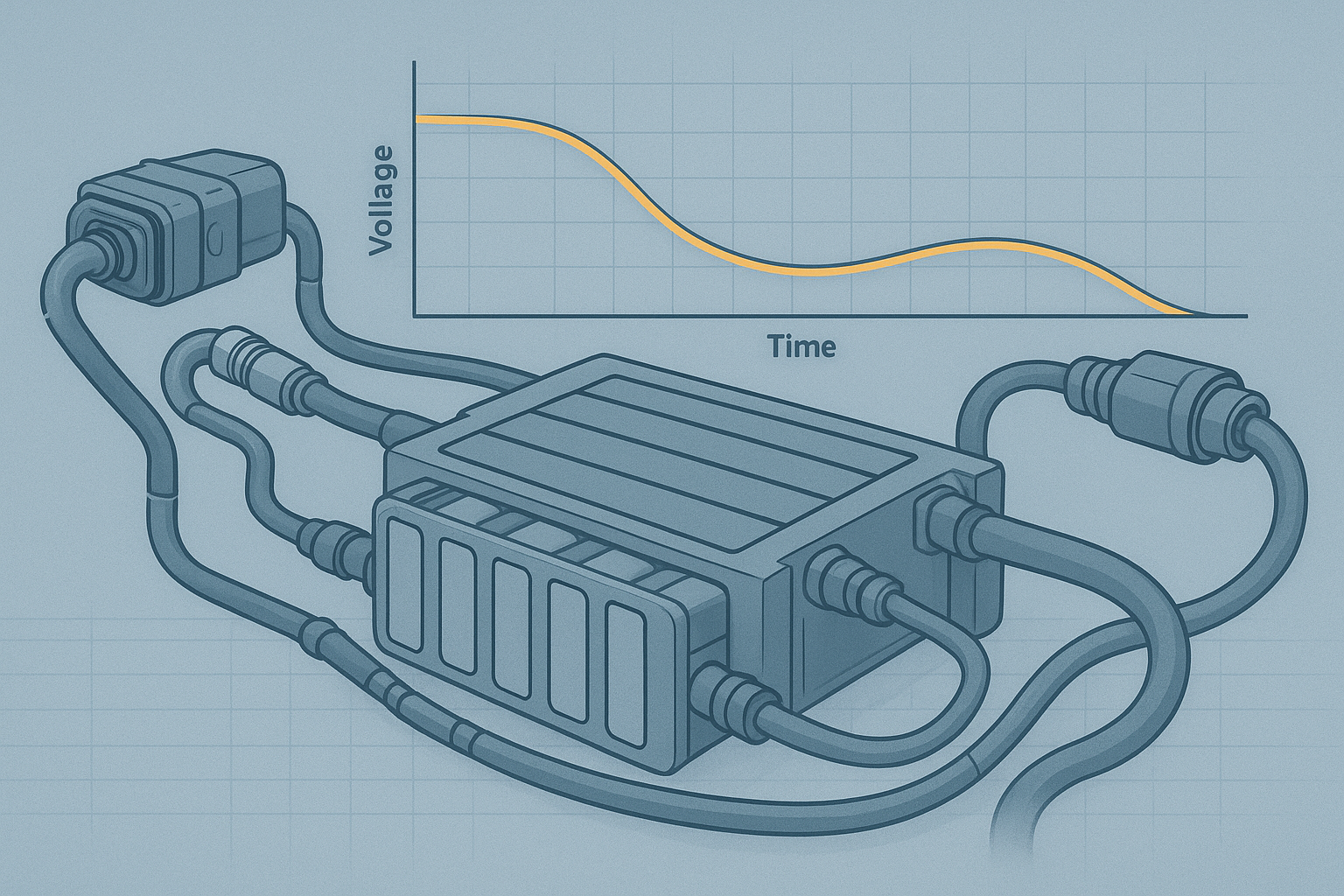

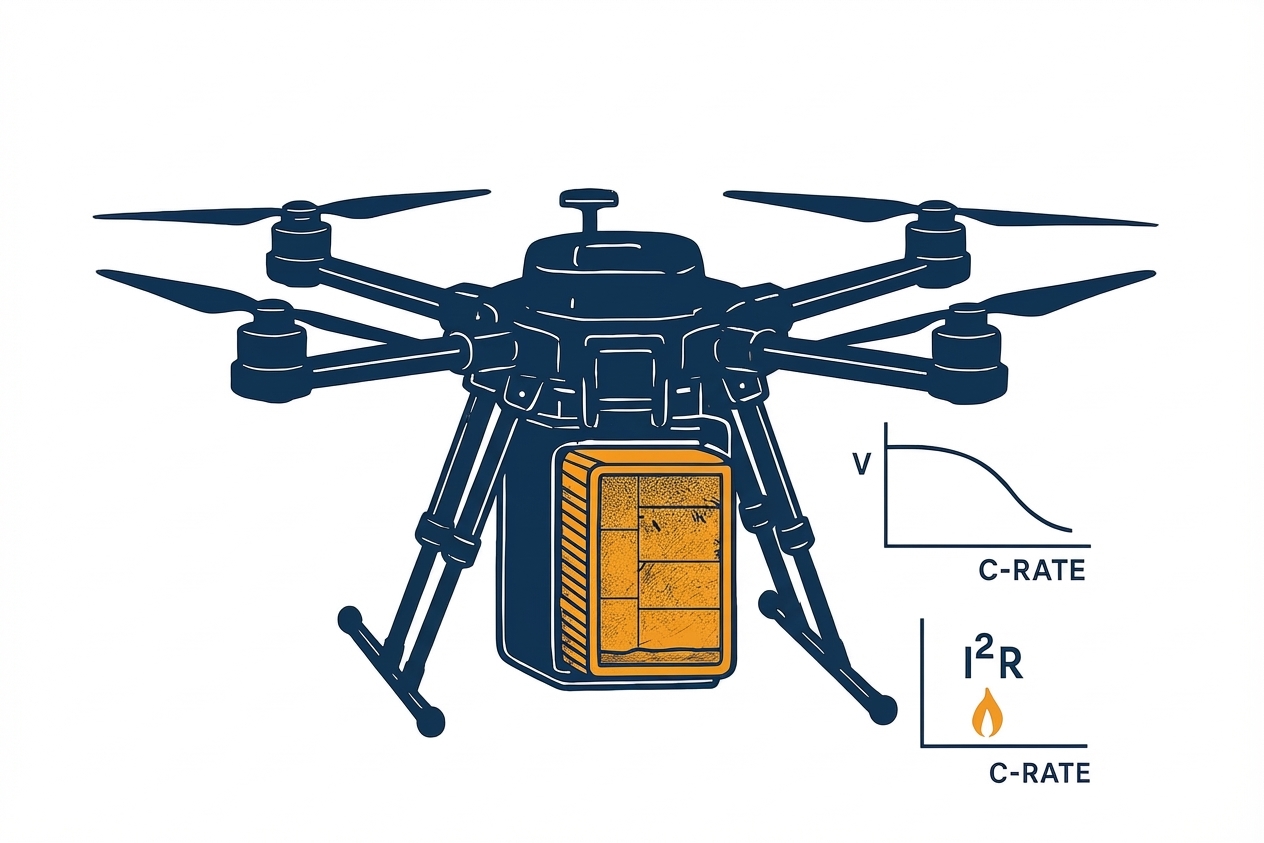

Charging strategy directly affects uptime and asset life, and small errors near full charge can compound risk in high-energy packs.

And when fleets scale, voltage sag isn’t a hobbyist problem—it’s an operations problem, because it triggers mission aborts, conservative buffers, and premature pack retirement (see Herewin’s criteria on drone battery voltage sag for industrial fleet reliability).

The future of interoperable networks: open standards vs. proprietary silos

If you believe drone logistics will be a global industry, then your energy layer needs to survive platform churn.

That means designing for interoperable charging infrastructure—not because “open is nice,” but because closed ecosystems create asset impairment risk.

In the EV charging world, the interoperability argument is explicit: proprietary protocols can strand infrastructure, while open standards reduce lock-in and increase upgrade flexibility (see the Open Charge Alliance’s Open Standards White Paper (2025) and BTC Power’s discussion of interoperability and stranded-asset risk (2025)).

Drone energy infrastructure is headed in the same direction.

Future-proofing via multi-platform compatibility

2026 is shaping up to be a real inflection point: moving from legacy pack architectures to higher-voltage platforms (including 32S) isn’t a minor spec bump—it’s a generational change in the “energy road” your network drives on.

Treat 18S–32S coverage as a procurement requirement. If your ground infrastructure can’t span that range, every charger or station you buy today carries asset impairment risk: it may work for the current fleet, yet become the first thing you have to replace when the next aircraft class arrives.

Use compatibility as risk control:

support across multiple pack voltages and configurations (treat 18S–32S as a range requirement)

configurable, version-controlled charge profiles

traceable compliance documentation for transport and safety

ability to standardize station behavior across geographies

This is wide-voltage battery compatibility as risk control—not a spec flex.
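To turn “18S–32S as a range requirement” into a number you can put in an RFQ, convert cell counts to a voltage window. The sketch below assumes conventional lithium-ion cells at roughly 3.0 V empty and 4.2 V full; the article does not specify a chemistry, so take the real per-cell limits from the cell datasheet. The function names are hypothetical.

```python
from typing import Tuple

# Assumed per-cell voltages for a conventional lithium-ion cell.
# Illustrative only: use the datasheet values for a real fleet.
EMPTY_V_PER_CELL = 3.0
FULL_V_PER_CELL = 4.2


def required_voltage_window(min_cells: int, max_cells: int) -> Tuple[float, float]:
    """Lowest and highest pack voltage a charger must handle for a cell-count range."""
    if not 0 < min_cells <= max_cells:
        raise ValueError("expected 0 < min_cells <= max_cells")
    return min_cells * EMPTY_V_PER_CELL, max_cells * FULL_V_PER_CELL


def charger_covers(charger_min_v: float, charger_max_v: float,
                   min_cells: int = 18, max_cells: int = 32) -> bool:
    """True if the charger's output range spans the whole required window."""
    low, high = required_voltage_window(min_cells, max_cells)
    return charger_min_v <= low and charger_max_v >= high


low_v, high_v = required_voltage_window(18, 32)
print(f"required window: {low_v:.1f} V to {high_v:.1f} V")
print("50-140 V charger covers range:", charger_covers(50.0, 140.0))
print("50-100 V charger covers range:", charger_covers(50.0, 100.0))
```

Voltage coverage is necessary but not sufficient: power rating, connectors, and communication protocol still need their own checks.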

For operators building standardized packs and workflows, vertical integration and compliance discipline are strategic. If you want examples of how suppliers frame this (certifications, traceability, ODM/OEM support), Herewin’s perspective is one reference point (see Herewin’s ODM/OEM one-stop battery solutions).

Building the global energy backbone

If you want a global drone logistics network, don’t start by “buying more aircraft.” Start by building roads. In drone logistics, the “roads” are your energy backbone:

standardized charging and handling

deterministic station behavior

audit-grade compliance and traceability

interoperability that protects capital over time

Aircraft performance wins demos. Infrastructure determinism wins renewals.

Drone fleet charging infrastructure checklist before you scale

This checklist is designed for procurement and ops leaders who need a charging stack that stays predictable as sites, shifts, and aircraft types multiply.

If Zipline-style growth is your goal, treat your energy stack like critical infrastructure. Before you standardize on a supplier, ask:

Can you support my fleet voltage roadmap end-to-end (e.g., 18S–32S) without forcing a charger replacement cycle?

Are charge profiles version-controlled (with approvals and rollback), or do settings drift site-by-site?

What telemetry and logs do I get by default (profile ID, pack ID, timestamps, faults, operator actions), and can I export them for QA/compliance?

How do you define and handle exceptions (thermal limits, imbalance, protection trips) so operators aren’t improvising?

What transport and safety documentation do you provide (e.g., UN38.3 where applicable and relevant product certifications), and how are records kept audit-ready?

How do you ensure pack-to-pack and batch-to-batch consistency across manufacturing sites?

These questions turn “battery sourcing” into a scalability decision: they determine whether your network behaves deterministically, or drifts as it grows.

Next step

If you’re planning to scale beyond a handful of sites, use this question as your gating metric:

How many interventions per 100 cycles does our energy workflow require today—and what happens when that number is multiplied by 50?
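If you already count interventions and cycles, the gating metric takes two small functions to compute. This is a sketch with made-up counts, and it assumes the intervention rate stays constant as volume grows, which is optimistic if queueing gets worse at scale.

```python
def interventions_per_100_cycles(interventions: int, cycles: int) -> float:
    """Observed intervention rate, normalized to 100 energy turns."""
    if cycles <= 0:
        raise ValueError("cycles must be positive")
    return 100 * interventions / cycles


def projected_daily_interventions(rate_per_100: float, cycles_per_day: float,
                                  scale_factor: float = 50) -> float:
    """Expected interventions/day if volume grows by scale_factor at the same rate."""
    return rate_per_100 / 100 * cycles_per_day * scale_factor


# Made-up pilot data: 12 interventions across 400 logged cycles, 20 cycles/day today.
rate = interventions_per_100_cycles(12, 400)
projected = projected_daily_interventions(rate, cycles_per_day=20)
print(f"{rate:.1f} per 100 cycles today, about {projected:.0f} interventions/day at 50x")
```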

If you want a structured review of how your current ground energy loop maps to the replication, variance-control, and instrumentation mechanisms above, you can contact our team to walk through your requirements.